Pneuron’s Cerebral Approach to Business Intelligence Focuses on Easy-to-Use Self-Service

One of the biggest challenges organizations face is realizing the benefits and value of their business intelligence investments. One major factor is the amount of time it takes to obtain actionable results from data for important business decisions. This can stretch to many weeks, and sometimes months, when complete systems must be rebuilt or large amounts of data integrated between data warehouses. An innovative BI company called Pneuron has built a product called the Pneuron Distributed Platform that has helped many companies shorten the lag time of data warehousing and data migration through a concept they call prescriptive analytics. To learn more about this platform, I was fortunate enough to interview Tom Fountain, CTO of Pneuron, to get a complete understanding of the Pneuron Distributed Platform and what it can do.

SR: Can you tell me a little about the Pneuron Distributed Platform?

Tom Fountain: Sure. The Pneuron Distributed Platform was actually built from a technology that came out of the NSA which had to do with building a non-invasive integration layer that could target and extract points of value from a widely disparate set of underlying systems and bring those together in a way to effectively build a solution.

SR: What key benefits does the Pneuron Distributed Platform provide for your customers?

Tom Fountain: Fundamentally our critical message to customers is to cut time-to-value and total cost of ownership in half, but retain the agility needed to deal with rapidly changing business conditions.

SR: What major problem does the Pneuron Distributed Platform solve?

Tom Fountain: The problem is that when companies try to solve a business requirement they end up having to integrate anywhere from four to six different product categories' worth of functionality. What we do is provide a single platform with key functionality from a handful of different product categories, all embedded in one platform. The solution designer can focus on the functional design and complete the design and deployment at a logical configuration level, with no low-level coding, which allows you to mix and match these disparate services. We call them “pneurons” and they basically craft a distributed process network that can solve business problems.

SR: What product categories would you say are included in this single platform?

Tom Fountain: In a way we are like SOA because we abstract the complexity of the underlying system by handling the low-level details for you. We are also like an analytics tool because we have native capability to perform mathematics and other types of analysis and run models. We are an enterprise mashup and visualization tool, containing a dashboard where you can visualize results. We are a BPM because you build this distributed processing network that works like an orchestrated or automated business process flow.

We are an ETL/CDC tool because we target data in underlying systems and extract only what’s needed for the specific problem directly from the source system. We don’t build intermediate data stores and data marts because we simply, at run time, grab what we need and run it through the network. The crucial thing is we’ve taken these key best-of-breed features from multiple categories and embedded them within the technology, and we allow the user to build a solution, deploy it, and run it. Lastly, we are also a distributed execution platform, so we are in effect homogenizing processing across the compute infrastructure wherever you have installed one of our servers.

SR: What exactly is a “pneuron”?

Tom Fountain: There are a couple of very critical components to the platform. It starts with what we call a pneuron; a pneuron is a purpose-specific mini application. It’s simply a Java object that does a specific task, and you configure its behavior at design time. We have a library of over 50 different pneurons; you can think of them as services, as each handles a specific element of a broader business solution. So the pneuron library has things like a web services pneuron with access to APIs; we have a query pneuron that connects to relational databases; we have an FTP pneuron and a whole host of other data interaction type pneurons so we can source or deliver results to various systems; we have a set of analytical pneurons that can import and run predictive models and rulesML, as well as other pneurons which offer an entire matching library as well as the Apache math. You simply click the correct pneuron, configure its behavior, leverage its unique contribution, and connect it up to other pneurons.
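To make the idea of a configurable, purpose-specific Java object more concrete, here is a minimal sketch. It is not Pneuron's actual API: the `Pneuron` interface, the `ThresholdPneuron` class, the `connect` helper, and the configuration keys are all hypothetical names invented for illustration. It only shows the general pattern described above, where behavior is set through configuration and small units are connected into a chain.

```java
import java.util.HashMap;
import java.util.Map;

public class PneuronSketch {

    /** Hypothetical contract: a pneuron takes one message and returns a result message. */
    interface Pneuron {
        Map<String, Object> process(Map<String, Object> message);
    }

    /** Hypothetical pneuron whose behavior is fixed at design time by its configuration. */
    static final class ThresholdPneuron implements Pneuron {
        private final String field;
        private final double threshold;

        ThresholdPneuron(Map<String, String> config) {
            this.field = config.get("field");
            this.threshold = Double.parseDouble(config.get("threshold"));
        }

        @Override
        public Map<String, Object> process(Map<String, Object> message) {
            // Copy the incoming message and add a flag; the input is left untouched.
            Map<String, Object> out = new HashMap<>(message);
            double value = ((Number) message.get(field)).doubleValue();
            out.put("flagged", value > threshold);
            return out;
        }
    }

    /** Connects two pneurons so the output of the first feeds the second. */
    static Pneuron connect(Pneuron first, Pneuron second) {
        return message -> second.process(first.process(message));
    }

    public static void main(String[] args) {
        // Configuration, not code, decides which field is checked and against what value.
        Pneuron flag = new ThresholdPneuron(Map.of("field", "amount", "threshold", "10000"));
        Pneuron label = msg -> {
            Map<String, Object> out = new HashMap<>(msg);
            out.put("route", Boolean.TRUE.equals(msg.get("flagged")) ? "review" : "pass");
            return out;
        };
        Pneuron network = connect(flag, label);
        System.out.println(network.process(Map.of("amount", 12500)));
    }
}
```

In this sketch, changing the configuration map is enough to point the same class at a different field or threshold, which is roughly the kind of design-time configuration the answer describes.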

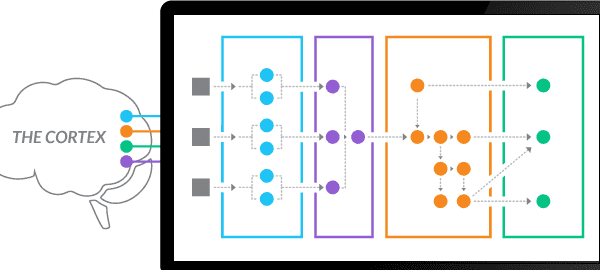

SR: What is a “cortex”?

Tom Fountain: The Cortex is our run-time server that provides the processing context. The entire platform is built in Java and we simply run it in a JVM. The Cortex provides processing threads when, and only when, a pneuron receives a message that is destined for it. The server will serve up those specific sets of instructions and hand the incoming message to that piece of pneuron code, where it will execute those instructions against the incoming message. That message might modify its activity, and the Cortex will take the results that are generated, package them up, and deliver them either in memory to another local pneuron or to a remote Cortex (if additional ones are installed) through either a web service or a JMS messaging infrastructure. The Cortex is really solving distributed computing issues. You can cluster them for availability; you can clone them, or dynamically scale additional ones if you hit a spike in workload. This is really where our patents lie; it is where the focus of intellectual development is.
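The dispatch behavior described above, where a thread is used only when a message arrives for a pneuron and the result is handed to the next one, can be sketched roughly as follows. This is an illustrative toy, not Pneuron's implementation: `CortexSketch`, `Route`, `register`, and `deliver` are hypothetical names, messages are plain strings, and only local in-memory delivery is modeled (delivery to a remote Cortex over a web service or JMS is left out).

```java
import java.util.Map;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.function.UnaryOperator;

public class CortexSketch {

    /** Hypothetical routing entry: the code to run plus the name of the next pneuron (or null). */
    static final class Route {
        final UnaryOperator<String> pneuron;
        final String next;

        Route(UnaryOperator<String> pneuron, String next) {
            this.pneuron = pneuron;
            this.next = next;
        }
    }

    private final Map<String, Route> routes = new ConcurrentHashMap<>();
    private final ExecutorService threads = Executors.newCachedThreadPool();

    void register(String name, UnaryOperator<String> pneuron, String next) {
        routes.put(name, new Route(pneuron, next));
    }

    /** Runs the target pneuron on a pool thread only when a message for it arrives. */
    CompletableFuture<String> deliver(String target, String message) {
        Route route = routes.get(target);
        if (route == null) {
            return CompletableFuture.failedFuture(
                    new IllegalArgumentException("unknown pneuron: " + target));
        }
        return CompletableFuture
                .supplyAsync(() -> route.pneuron.apply(message), threads)
                .thenCompose(result -> {
                    if (route.next == null) {
                        return CompletableFuture.completedFuture(result);
                    }
                    // Hand the result in memory to the next local pneuron.
                    return deliver(route.next, result);
                });
    }

    void shutdown() {
        threads.shutdown();
    }

    public static void main(String[] args) {
        CortexSketch cortex = new CortexSketch();
        cortex.register("trim", String::trim, "upper");
        cortex.register("upper", String::toUpperCase, null);
        System.out.println(cortex.deliver("trim", "  wire transfer  ").join()); // prints "WIRE TRANSFER"
        cortex.shutdown();
    }
}
```

A cached thread pool is used here so that no threads exist while the network is idle; that is one simple way to approximate "threads when and only when a message arrives", and a real server would likely add clustering and scaling concerns that this sketch ignores.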

SR: What types of solutions are being built with Pneuron?

Tom Fountain: Pneuron is being used in a wide variety of situations where elements of the business solution reside in highly distributed and disparate sources, yet the business requirements demand short time-to-value and retention of solution agility. As a complement to more classic “Big Data” solutions, we are driving dramatic productivity gains in the disposition of Anti-Money Laundering cases by automating the access, retrieval, and scoring of supplementary data to discern false positives. In situations demanding higher performance, we are importing elements of existing solutions, replicating those elements, then creating a parallel and distributed execution model to accelerate throughput (without a code rewrite).

SR: Can Pneuron work in real-time situations?

Tom Fountain: In cases where real-time processing is required, we typically set up event-driven execution networks that dynamically take targeted data, perform the distributed analytics to classify and route intelligence, and deliver those results to decision-makers and follow-on systems. In this latter case, we are delivering near real-time risk management capability to banks and other institutions because we target data directly in underlying systems, not batch-processed, and hence delayed, extracts. Our ability to apply analytics and logic on a streaming basis provides excellent functionality, while our non-invasive integration approach retains the required solution agility. This combination is a real advantage for banks dealing with constantly changing business requirements and regulations.

SR: How does Pneuron address the emerging disciplines of Predictive and Prescriptive Analytics?

Tom Fountain: We offer pneurons that allow you to import various Predictive models and further augment your solution with imported business rules or directly built logic-based routing. Those capabilities, along with our ability to interact directly with Enterprise applications, provide a platform to source critical inputs, execute Predictive logic, assess execution alternatives by interacting with multiple planning systems, then ultimately launch actions directly to the Enterprise transactional systems. This is done within a single platform, without low-level coding, and without the risky, costly, and time-consuming prerequisites of data and systems integration.