5 Steps to Fast-Track GDPR Compliance: Tips From an Industry Insider

By Majken Sander

Only a few days to go now. The GDPR clock strikes on May 25, 2018. Industry pundits are either ramping up scare stories about the impending GDPR legislation – or totally ignoring it.

Even though it’s not happened yet, a lot of people already seem fed up with it. Some say this is a “draconian” new law which poses a threat to the way all companies operate. Some have even described it as a mechanism placed under businesses that could potentially destroy all the data companies hold, along with their rights to analyze it as they see fit.

But let’s all calm down and look at this more pragmatically. If you’re not already GDPR-ready, here’s a quick way for you to prepare an overview of what you need to do. And if you’re in the middle of your journey, you might still find value in this as a checklist. If you’ve already done everything, then please reach out to me, as I really want to hear all about your process, findings and challenges, and how you solved them.

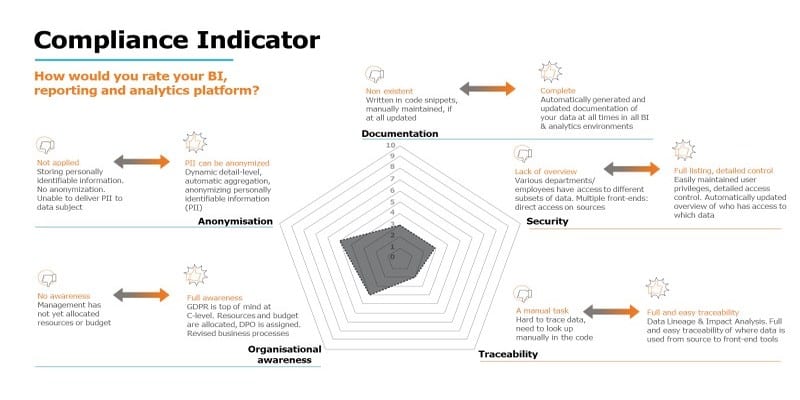

The Compliance Indicator – Solid Overview

To get an overview of the process of obtaining a GDPR-compliant BI and analytics solution, walk through the topics of the compliance indicator diagram as shown below. Evaluate this methodology piece by piece, and in order, to determine your company’s current state.

If, for example, it scores you as being heavily manually driven, as well as unable to anonymize and aggregate, then you’ll know where to focus your attention.

Some of the measures also suggest that you might benefit from adding some degree of automation to your current BI and analytics environment. Such automation would let you and every employee working with your data focus on the analysis and data usage part, handing the more tedious, repetitious tasks over to IT solutions. In this way, automation enables your organization to benefit to the largest possible extent from the business value that can be found within your data.

With that, let’s take a look at a complete five-step process that you should follow.

1. Documentation

It all begins with an overview of your current data landscape.

Which data do you hold? Which source systems do they come from? Where is your data stored?

As simple as this may sound, in some cases it might mean having to look into old hand-coded scripts that are currently extracting the data, perhaps performing transformations and then moving the data from the source system to one or more target systems. This can be complicated, especially if the person who created the original scripts no longer works for the organization.

Thankfully, modern BI platforms can automate documentation on demand, which makes it easy for you to deliver up-to-date documentation whenever an audit requires you to do so.
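If you want to start before any tooling is in place, the three questions that open this step can be recorded in a very small inventory. The following is only a sketch: the `DataAsset` fields, the system names and the sample entries are all hypothetical, made up here for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class DataAsset:
    name: str           # which data do you hold?
    source_system: str  # which source system does it come from?
    stored_in: str      # where is it stored?
    personal: bool      # does it contain personal data?


# Hypothetical entries, for illustration only.
INVENTORY = [
    DataAsset("customer_email", "CRM", "data_warehouse.dim_customer", True),
    DataAsset("invoice_amount", "ERP", "data_warehouse.fact_invoice", False),
    DataAsset("customer_phone", "CRM", "crm_backup", True),
]


def personal_data_by_location(inventory):
    """Group the names of personal-data assets by storage location."""
    locations = defaultdict(list)
    for asset in inventory:
        if asset.personal:
            locations[asset.stored_in].append(asset.name)
    return dict(locations)
```

Calling `personal_data_by_location(INVENTORY)` lists, per storage location, the personal data you would have to account for in an audit.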

2. Traceability

Could you simply explain to someone where the data you are looking at originates from? Could you answer the questions, “Where does this field originate from?” or “How is this measure calculated?”

For example: Could you say, “The measure ‘Gross’ is calculated based on the amount from each invoice line and the currency as stated on the line, at the rate found in the currency table in the ERP system. It is updated once a month.”
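The “Gross” explanation above can be written down as a small calculation. This is a sketch under assumptions: the rates in `CURRENCY_TABLE` are invented stand-ins for the ERP currency table, and the invoice lines are sample data.

```python
from decimal import Decimal

# Hypothetical rates to the reporting currency, standing in for the
# currency table in the ERP system.
CURRENCY_TABLE = {
    "EUR": Decimal("1.00"),
    "USD": Decimal("0.80"),
    "DKK": Decimal("0.13"),
}


def gross(invoice_lines, rates):
    """Sum each line's amount, converted at the rate for the line's currency."""
    return sum(
        (amount * rates[currency] for amount, currency in invoice_lines),
        Decimal("0"),
    )


# Sample invoice lines as (amount, currency) pairs.
lines = [
    (Decimal("100"), "EUR"),
    (Decimal("50"), "USD"),
    (Decimal("200"), "DKK"),
]
total = gross(lines, CURRENCY_TABLE)  # 100 + 40 + 26 = 166
```

If you can express a measure this plainly, you can also explain it to an auditor or a customer.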

When evaluating traceability, taking the opposite direction can also be helpful. You can ask, “In which measures is this database field from this source system used?” Preferably, you should be able to trace the use all the way to the dashboards, apps and data visualizations it is part of.

A really helpful BI and analytics implementation makes it possible for you to “go both ways” when trying to trace the life of your data. This can be crucial in explaining why you are holding this data.
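To make “going both ways” concrete, here is a minimal sketch that treats lineage as a list of edges and walks it in either direction. The field, measure and dashboard names are hypothetical; a BI platform would keep this metadata for you.

```python
from collections import defaultdict

# Hypothetical lineage edges: (upstream, downstream).
EDGES = [
    ("erp.invoice_line.amount", "measure.gross"),
    ("erp.currency.rate", "measure.gross"),
    ("measure.gross", "dashboard.sales_overview"),
    ("crm.customer.email", "dashboard.campaigns"),
]


def build_index(edges):
    """Build lookup tables for both directions of the lineage graph."""
    downstream, upstream = defaultdict(set), defaultdict(set)
    for src, dst in edges:
        downstream[src].add(dst)
        upstream[dst].add(src)
    return downstream, upstream


def trace(start, index):
    """Return every node reachable from start by following the given index."""
    seen, stack = set(), [start]
    while stack:
        node = stack.pop()
        for nxt in index.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen


DOWNSTREAM, UPSTREAM = build_index(EDGES)
```

`trace("erp.currency.rate", DOWNSTREAM)` answers “where is this field used?”, while `trace("dashboard.sales_overview", UPSTREAM)` answers “where does this dashboard get its data?”.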

3. Security

Next, you need an overview of all the people who have access to each specific set of data.

One starting point could be to describe each person by role or job function, to decide which data is relevant to that role and to grant access to them based on their reasons for needing it. When you do this, also remember to include the job functions related to IT personnel, database log files, back-up systems and so on.
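A role-based overview like this can be kept as two simple mappings. The sketch below uses hypothetical roles, users and dataset names, and it deliberately includes an IT role with access to logs and backups.

```python
# Hypothetical mapping from role to the datasets that role needs.
ROLE_ACCESS = {
    "sales_analyst": {"sales_lines", "customer_master"},
    "finance_controller": {"sales_lines", "invoices"},
    "dba": {"database_logs", "backups"},  # IT functions count too
}

# Hypothetical mapping from person to roles.
USER_ROLES = {
    "anna": {"sales_analyst"},
    "bo": {"finance_controller", "dba"},
}


def who_can_access(dataset):
    """Return the sorted names of everyone whose roles grant access to dataset."""
    return sorted(
        user
        for user, roles in USER_ROLES.items()
        if any(dataset in ROLE_ACCESS.get(role, set()) for role in roles)
    )
```

For example, `who_can_access("sales_lines")` returns `['anna', 'bo']`, which is the per-dataset overview this step asks for.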

4. Anonymization

Let’s consider what we call “anonymization and the ‘right to be forgotten.’” Business processes come into play here. When a customer contacts your company and asks to either see all the data you store on them or to exercise their “right to be forgotten,” how will your BI and analytics solution handle this request?

Often the customer data is stored in the source systems. Extracting the complete dataset for each individual might be a tiresome task if solved by querying across every relevant system. An alternative solution would be to access your data warehouse to extract the customer data. This spares the source systems any load from customer queries. It would also be a lot easier to assemble the data, as you only have to query one place: the data warehouse.
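As a sketch of the “query one place” idea, the following uses an in-memory SQLite database as a stand-in for a data warehouse. The table names, columns and sample rows are hypothetical, and the list of customer tables is assumed to come from your documentation.

```python
import sqlite3


def build_demo_warehouse():
    """Create a tiny in-memory stand-in for a data warehouse."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE dim_customer (customer_id INTEGER, name TEXT, email TEXT);
        CREATE TABLE fact_sales (customer_id INTEGER, product TEXT, amount REAL);
        INSERT INTO dim_customer VALUES (1, 'Ada', 'ada@example.com');
        INSERT INTO dim_customer VALUES (2, 'Bob', 'bob@example.com');
        INSERT INTO fact_sales VALUES (1, 'Widget', 9.5);
        INSERT INTO fact_sales VALUES (2, 'Gadget', 20.0);
        INSERT INTO fact_sales VALUES (1, 'Gadget', 20.0);
        """
    )
    return conn


# Tables assumed to carry a customer_id column (hypothetical names).
CUSTOMER_TABLES = ("dim_customer", "fact_sales")


def subject_access_export(conn, customer_id):
    """Collect every row stored about one customer, grouped by table."""
    export = {}
    for table in CUSTOMER_TABLES:  # fixed names, never user input
        cur = conn.execute(
            f"SELECT * FROM {table} WHERE customer_id = ?", (customer_id,)
        )
        columns = [col[0] for col in cur.description]
        export[table] = [dict(zip(columns, row)) for row in cur.fetchall()]
    return export
```

With `conn = build_demo_warehouse()`, a call to `subject_access_export(conn, 1)` gathers that customer’s rows from every listed table without touching a source system.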

Things are a little different when it comes to the “right to be forgotten.” In some cases, deleting data that you no longer have any reason to store might bring some ERP systems to a halt since they simply will not accept data being deleted. If this is sorted out by the vendor of your source systems, you may have to add parts of the customer’s data to an aggregated data pool in your data warehouse and then delete the detailed dataset.

For example: Sales lines representing one product at a certain price at a specific store are retained, although no customer numbers are attached to them.
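The aggregate-then-delete idea can be sketched in a few lines. The row layout and the sample data are hypothetical; the point is only that the customer number is dropped while product, price and store survive.

```python
from collections import defaultdict


def forget_customer(detail_rows, aggregate, customer_id):
    """Fold one customer's sales lines into the aggregate, then drop the detail.

    Returns the detail rows that remain after the customer is removed.
    """
    kept = []
    for row in detail_rows:
        if row["customer_id"] == customer_id:
            # The aggregate key carries no customer number.
            key = (row["product"], row["price"], row["store"])
            aggregate[key] += row["quantity"]
        else:
            kept.append(row)
    return kept


# Hypothetical sales lines, for illustration only.
detail = [
    {"customer_id": 42, "product": "Widget", "price": 10, "store": "Aarhus", "quantity": 2},
    {"customer_id": 42, "product": "Widget", "price": 10, "store": "Aarhus", "quantity": 1},
    {"customer_id": 7, "product": "Gadget", "price": 25, "store": "Odense", "quantity": 1},
]
aggregate = defaultdict(int)
remaining = forget_customer(detail, aggregate, 42)
```

Keep in mind that an aggregate built from very few customers might still point to an individual, so the level of aggregation deserves some thought.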

If you decide to store detailed data, then decide to what extent you might need aggregated tables and to what extent masking the data will be part of your solution. Does your current BI environment support automatic handling of data masking?

Oftentimes, it makes sense to leave the task of masking data to the data warehouse along with other things that require computational power, such as aggregations and summary tables. This approach leaves the tasks of analysis and visualization to the front-end tools.
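What masking in the data warehouse layer might look like is sketched below. The masking rules and column names are hypothetical choices for illustration, not a recommendation of any particular masking standard.

```python
def mask_email(email):
    """Keep the first character and the domain, star out the rest."""
    local, _, domain = email.partition("@")
    return local[:1] + "*" * (len(local) - 1) + "@" + domain


def mask_name(name):
    """Reduce each part of a name to its initial."""
    return " ".join(part[:1] + "." for part in name.split())


# Hypothetical mapping from column name to masking rule.
MASKS = {"email": mask_email, "name": mask_name}


def mask_row(row):
    """Apply the configured mask to each column that has one."""
    return {
        col: (MASKS[col](val) if col in MASKS else val)
        for col, val in row.items()
    }
```

Front-end tools would then only ever see the output of `mask_row`, so analysis and visualization work the same way whether or not a column is masked.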

5. Organizational Awareness

Management alignment is important, and communication and buy-in throughout the organization are essential. Without a focus on commitment from management, becoming GDPR compliant will be an even larger challenge as the process requires people’s attention, money and resources – even more so with the deadline rapidly approaching.

One initiative might require a new work process to handle customer requests to see their data. Who will receive these requests, carry out the work and reply to each customer? Since business processes will change, management needs to be aware of this and take control. Personal skill sets might need upgrading, extra hours might need to be budgeted for new tasks, and additional software licenses might need to be purchased. Maybe now is the time for you to re-think or revise your IT data and information architecture?

Benefits of GDPR Compliance

There really is true value to be found in GDPR compliance. It will encourage us all – every organization that holds data – to take a closer look at which data we register and why we feel the need to hold it.

A proactive approach to this might benefit your marketing efforts, not just by letting your customers know that you as a company care about their privacy, but also by holding only relevant data to target the most active and receptive customers.

Internally, you can reap benefits by performing a health check on your current BI & analytics environment. Now could be the right time for data warehouse automation, a governance project and a security revision.

Be on the lookout for a BI and analytics platform that supports your future needs by being capable of adding more data and more sources at an ever-faster pace. Look for shorter time-to-data and the freedom of choice when it comes to using several different front-ends, analytical tools and programming languages.

Seek out a platform that makes the most out of your data by supporting the decisions and insights in your company. Go for solutions that automate some of the more tedious tasks, leaving you and your colleagues time to work with the data to make them work for you.

Majken Sander is a data nerd, business analyst, and solution architect at TimeXtender. She is well-known in industry circles as an influential industry executive, international speaker, and accomplished data expert. Majken has worked in IT, management information, analytics, business intelligence and data warehousing for more than 20 years. She is a tech evangelist and blogs on topics like business value of data, Data Warehouse Automation, GDPR, BI and Analytics at TimeXtender’s blog. Majken can be reached at ms@timextender.com.
