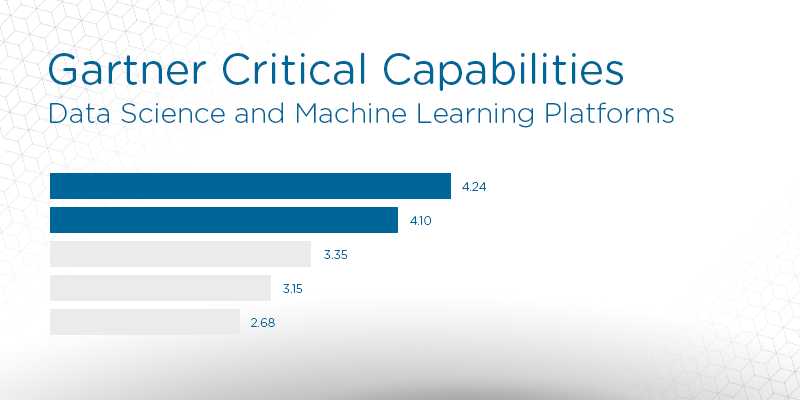

2019 Gartner Critical Capabilities for Data Science and Machine Learning Platforms: Key Takeaways

Analyst house Gartner, Inc. has released its 2019 Critical Capabilities for Data Science and Machine Learning Platforms, a companion report to the popular Magic Quadrant. Used in conjunction with the Magic Quadrant, Critical Capabilities is an additional resource that can help buyers of data and analytics solutions find the products that best fit their organizations.

Gartner defines critical capabilities as “attributes that differentiate products/services in a class in terms of their quality and performance.” Gartner rates each vendor’s product or service on a five-point scale (five being best) in terms of how well it delivers each capability. Critical Capabilities shows which products are best for each use case and includes a comparison graph for each, along with in-depth descriptions of the various points of comparison.

The study highlights the 19 vendors Gartner considers most significant in this software sector and evaluates them against 14 critical capabilities and, now, four use cases prevalent in the space:

- Business exploration

- Advanced prototyping

- Production refinement

- Nontraditional data science

The editors at Solutions Review have read the report, available here, and pulled out three key takeaways.

Gartner recommends avoiding long-term lock-in

Gartner recommends that data and analytics leaders incorporate data science and machine learning into their BI strategies. However, given the popularity of open source data science software, Gartner tells buyers to avoid long-term lock-in with commercial product vendors. On that same front, organizations can maintain a level of flexibility by investing in both traditional and open source tools. Data science is currently one of the fastest-moving software markets in the world. Organizations that recognize that fact will be better positioned to take advantage of it when evaluating solutions to help them generate business insights.

The best data science tool is one that you select based on your use cases, user skills and deployment environment.

Augmented data science is coming

Gartner projects that 40 percent of data science tasks will be automated in the near future. This is perhaps one of the biggest reasons why the researcher suggests avoiding long-term lock-in with one provider: the open source community simply drives too much innovation in the space. This augmentation will result in better productivity and broader adoption of data science software among non-technical users.

Automation has become the new normal in the analytics and BI space, and in recent months it has even made its way into some of the more technology-forward data management platforms. Software referred to as “augmented” uses machine learning to change how analytic content is developed and used.

TIBCO Software and RapidMiner lead the way

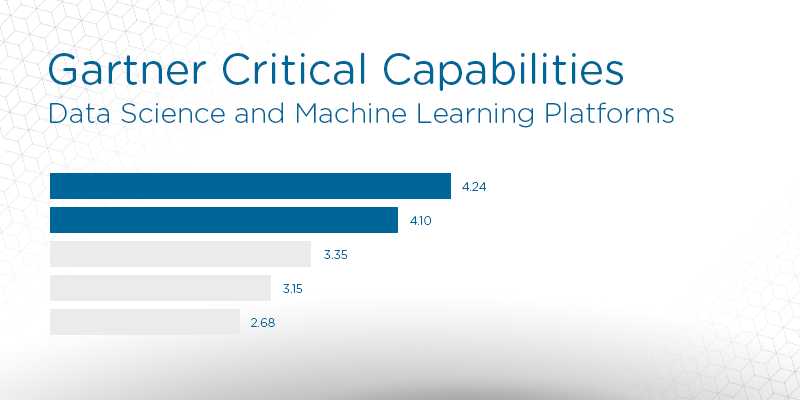

TIBCO Software and RapidMiner scored in the top two for each of the four data science use cases Gartner covered in the report. TIBCO took the top spot for business exploration, production refinement and nontraditional data science, while RapidMiner led the advanced prototyping use case. TIBCO offers an open platform with excellent data exploration, visualization and advanced analytic capabilities. Its main applications are in banking, manufacturing and natural resources.

RapidMiner users enjoy the tool’s user interface, as well as its data access, machine learning, and extensibility features. Reference customers also score it well for ease of use, speed, model development, and the ability to manage large numbers of models.

Data science buyers who ultimately selected TIBCO Software or RapidMiner also considered SAS, KNIME, Alteryx and IBM during product evaluation.