Pentaho Unveils Data Integration Enhancements to Future-Proof Big Data

This morning, Pentaho unveiled five new improvements to help enterprises overcome Big Data complexity, the skills gap and integration challenges in sophisticated environments. The announcement was made on the opening day of the 2016 Strata + Hadoop World conference. Perhaps the most notable feature enhancement in this product update is an adaptation of SQL on Spark. The improvements help IT teams deliver value from Big Data projects faster, while allowing organizations to use existing resources by eliminating the need for manual coding. The update also provides tighter security and broader support for Big Data technology ecosystems.

Pentaho Big Data Integration feature enhancements include:

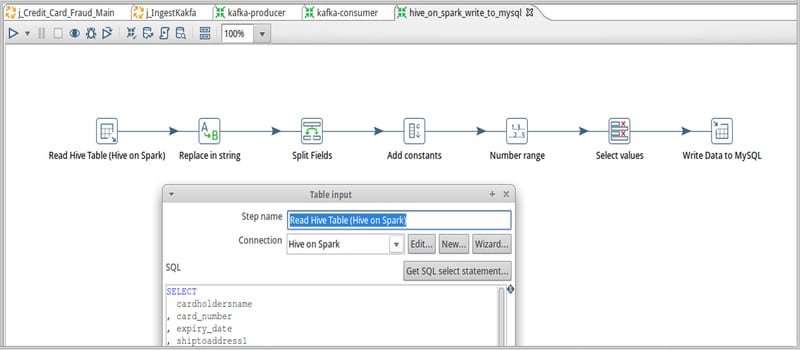

- Expanded Spark integration: Lowers the skill barrier for Spark, letting users flexibly coordinate, schedule, reuse, and manage Spark SQL in data pipelines and integrate Spark apps into larger data processes to get more out of them.

- Expanded metadata injection tools: Allows data engineers to generate PDI transformations at runtime instead of having to hand-code each data source, reducing costs.

- Expanded Hadoop data security: Promotes better Big Data governance and protects clusters from intruders, with enhanced Kerberos integration for secure multi-user authentication and Apache Sentry integration for rule enforcement.

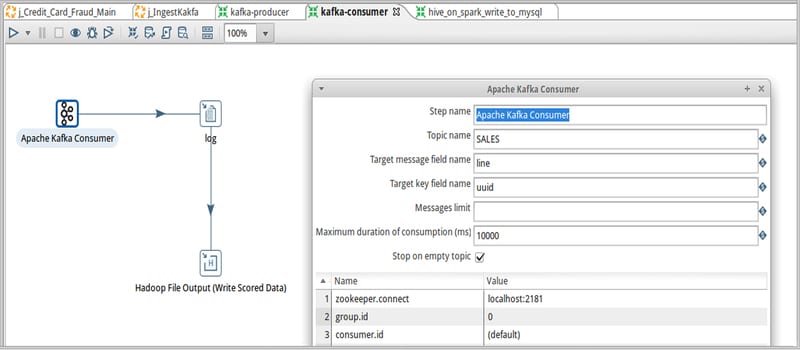

- Apache Kafka support: Gives enterprise customers support for sending data to and receiving data from Kafka, facilitating continuous data processing use cases in Pentaho Data Integration.

- Increased support for additional Hadoop file formats: Pentaho Data Integration supports the output of files in Avro and Parquet, both popular formats for storing data in Hadoop in Big Data onboarding use cases.

Donna Prlich, Senior Vice President of Product Marketing at Pentaho, concludes: “Our latest enhancements reflect Pentaho’s continued mission to quickly make big data projects operational and deliver value by strengthening and supporting analytic data pipelines. Enterprises can focus on their big data deployments, removing the complexity and time involved in data preparation by taking advantage of new, high potential technologies like Spark and Kafka in the big data ecosystem.”

Read the official press release.
