Syncsort Simplifies Integration of Real-Time Data in Spark, Kafka, Hadoop

Syncsort has announced version 9 of its data integration solution, DMX-h, which provides native integration with Apache Spark and Apache Kafka and allows organizations to access and integrate enterprise-wide data with streams from real-time sources. Version 9 simplifies Spark application development, enabling applications to use the power of an evolving Big Data stack. In addition, DMX-h version 9 securely integrates batch and real-time data streams from Kafka, mainframe, relational databases, and unstructured sources in a single data pipeline that supplies Hadoop and Spark.

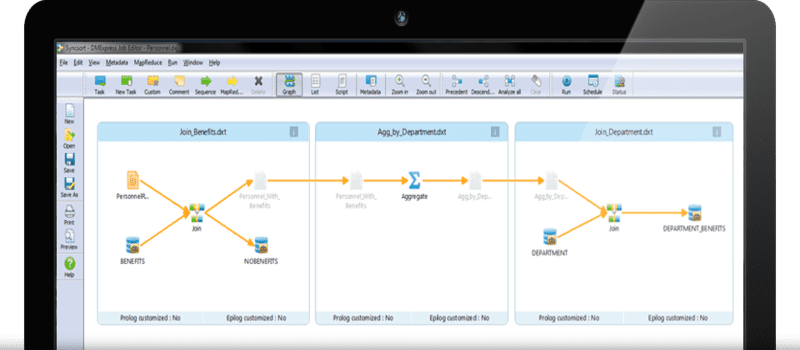

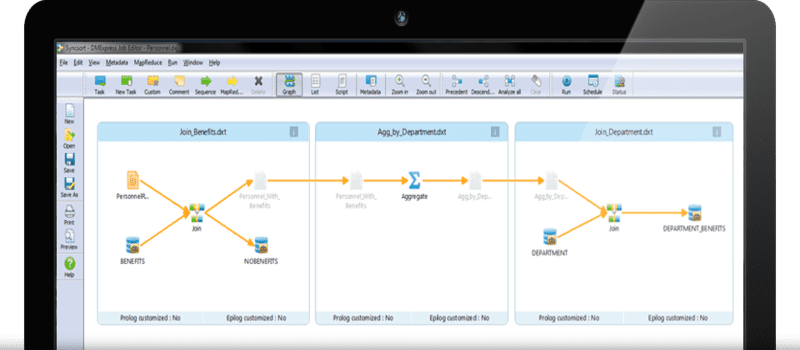

As a result of these updates, customers can take jobs that were originally designed for MapReduce and run them natively in Spark. Users switch a job to Spark by changing the execution framework from a drop-down menu in the graphical user interface, with no rewriting or recompiling required. This simplifies the process of moving applications from standalone server environments and Hadoop MapReduce to Spark.

Syncsort DMX-h comes pre-packaged with Intelligent Execution, which plans how each application executes at run time based on which compute framework is best suited at that moment. This helps to future-proof applications as Big Data technology stacks evolve. The new capabilities in version 9 build on Syncsort’s contributions to Spark packages, which allow enterprises to include historical transactional data along with real-time data sources such as mobile devices and the Internet of Things.

Syncsort’s integration with the Kafka distributed messaging system allows users to leverage DMX-h’s graphical user interface to subscribe to, transform, and enrich enterprise-wide data coming from real-time Kafka queues. DMX-h can also publish these datasets to Kafka, simplifying the creation of real-time analytics applications by cleansing, pre-processing, and transforming real-time streaming data.

Syncsort’s General Manager of Big Data Tendü Yoğurtçu concludes: “Many of our financial, telecommunications, insurance and healthcare customers need an easy way to gather, transform and distribute batch and streaming data coming from multiple enterprise data sources, including Kafka, for advanced analytics in Hadoop and Spark. The new capabilities we are delivering today meet those needs while continuing to provide best-in-class, secure access to mainframe data from within the fastest growing data platforms.”

Click here for the full press release.
