Syncsort Simplifies Mainframe Big Data Access in Hadoop

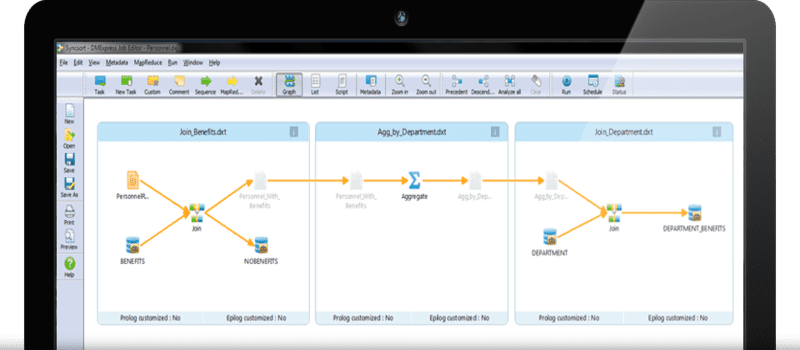

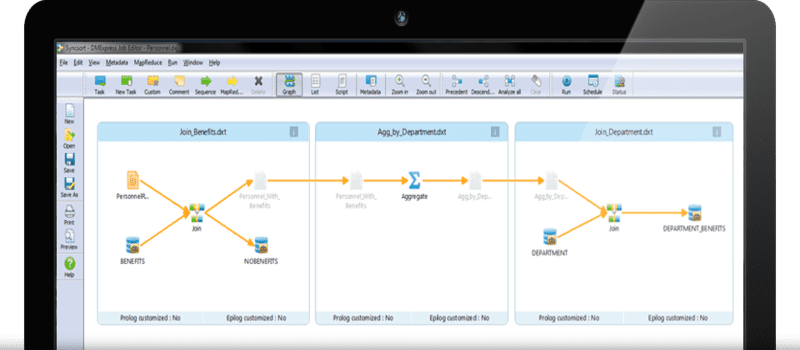

Syncsort today announced new capabilities for its flagship data integration software, DMX-h, that for the first time allow organizations to work with mainframe data inside Hadoop or Spark in its native format, a capability that is essential for maintaining data lineage and compliance. Syncsort also introduced the new high-speed DMX Data Funnel, which users can deploy to quickly ingest hundreds of database tables from sources like DB2, dramatically reducing the time and effort needed to populate their enterprise data hubs.

Many companies in highly regulated industries such as banking, insurance, and healthcare have struggled to use Hadoop or Spark to cost-effectively analyze massive volumes of mainframe data because they are required to preserve the data in its original EBCDIC format, which could not be processed inside Hadoop. With Syncsort’s updated offering, these organizations can now leverage the benefits of Big Data platforms to quickly analyze mainframe data just as they do with data from any other source, without the need for specialized skills.

Another significant hurdle facing mainframe users is getting their data into Hadoop. With Syncsort’s new Data Funnel function, users can now take hundreds of tables and, in one step, load them directly into the Hadoop Distributed File System (HDFS). Together, the new capabilities allow customers to rapidly bring data in its rawest form into a central Hadoop repository, supporting many downstream use cases and facilitating management of essential operational data inside Hadoop.

Tendü Yoğurtçu, General Manager of Syncsort’s Big Data business, adds: “The largest organizations want to leverage the scalability and cost benefits of Big Data platforms like Apache Hadoop and Apache Spark to drive real-time insights from previously unattainable mainframe data, but they have faced significant challenges around accessing that data and adhering to compliance requirements. Our customers tell us we have delivered a solution that will allow them to do things that were previously impossible. Not only do we simplify and secure the process of accessing and integrating mainframe data with Big Data platforms, but we also help organizations who need to maintain data lineage when loading mainframe data into Hadoop.”

